Boost content engagement using feedback loops in 2026

TL;DR:

- Focusing on user needs through continuous feedback improves content relevance and effectiveness.

- Implementing a four-stage feedback loop helps systematically gather, analyze, act, and measure content improvements.

- AI tools enable scalable feedback analysis, guiding small teams to make data-driven decisions that drive growth.

Most content teams keep asking the same wrong question: "How do we publish more?" The real question is "What do our users actually need?" The gap between those two questions costs businesses real money, real traffic, and real audience trust. When you shift focus from output volume to systematic feedback, something clicks. Content becomes sharper, more relevant, and measurably more effective. This guide breaks down exactly how feedback loops work, which methods cut through the noise, and how AI tools make the whole process scalable for lean marketing teams trying to grow without burning through their budget.

Table of Contents

- Why feedback is essential for content success

- The feedback loop framework for content teams

- Types of feedback: Engagement signals vs. direct surveys

- How AI and automation shape feedback and content improvement

- Turning feedback insights into real growth opportunities

- Our take: Why great feedback beats more content every time

- Next steps: Automate feedback-driven growth

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Feedback fuels better content | Actively listening to users, not just producing more content, leads to superior engagement and results. |
| Blend qualitative and quantitative | Using both surveys and analytics reveals deeper insights than depending on one alone. |
| AI boosts speed and consistency | AI-powered feedback frameworks let small teams analyze and improve content more efficiently. |
| Operationalize for real impact | Transforming raw feedback into prioritized actions drives measurable business growth. |

Why feedback is essential for content success

Publishing more content without listening to your audience is like opening new restaurant locations before fixing the menu. You scale the wrong thing. Feedback is what closes the distance between what your team thinks users want and what users actually respond to.

The business case for feedback is not abstract. 38.3% of businesses directly link feedback to revenue growth, and 23.3% see measurable satisfaction gains when they build structured feedback practices. Those are not fringe results. They point to revenue that better-listening teams capture and output-obsessed teams leave on the table.

Here is what happens when teams ignore feedback and just keep producing:

- Engagement dips because content misses what users care about at each stage of their journey

- Retention suffers because readers do not feel like the content was made for them

- Resources get wasted on topics that generate traffic but do not convert or retain

- Competitors who do listen pull ahead by publishing fewer but more targeted pieces

Good content engagement strategies always start with listening, not with a content calendar. The calendar comes after you understand what your audience is actively searching for, frustrated by, or missing entirely.

"Feedback is not a one-time survey. It is the continuous signal that tells you whether your content strategy is working or just working hard."

The teams that win consistently treat feedback as infrastructure, not as a quarterly exercise. They build systems that collect signals constantly, analyze them promptly, and feed those insights straight back into the content creation process. That cycle is what separates brands that grow steadily from brands that plateau and wonder why. Turning feedback loop best practices into specific marketing actions starts with a clear framework.

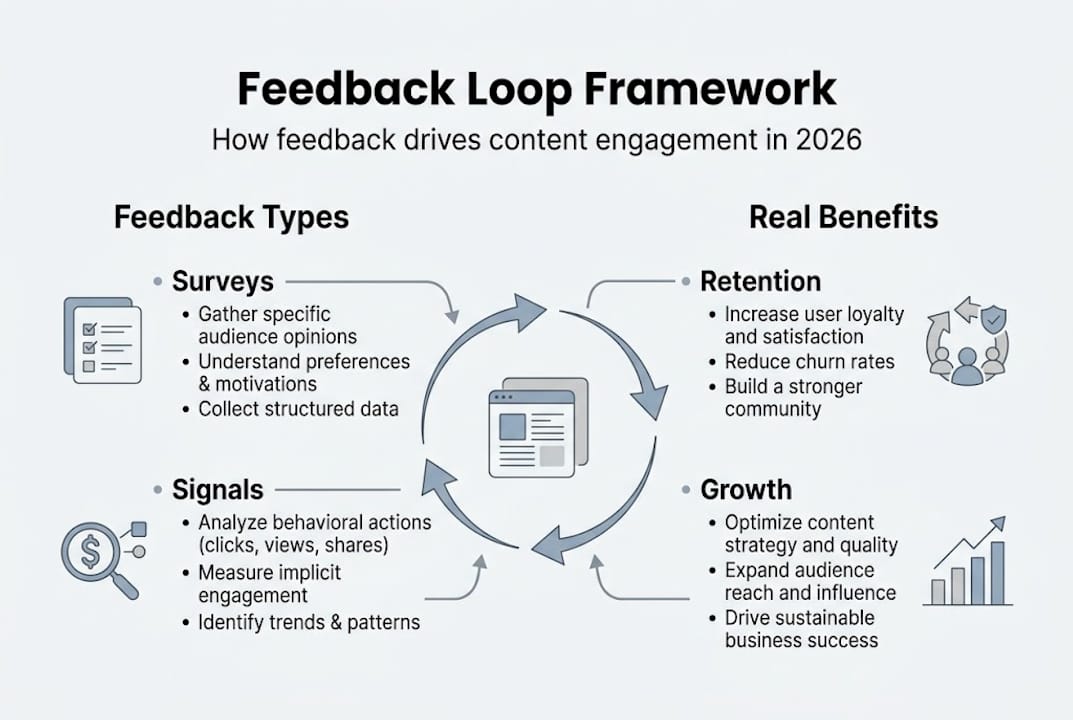

The feedback loop framework for content teams

A feedback loop is not magic. It is a four-stage cycle that keeps content improving with every iteration. When you run this cycle consistently, you stop guessing and start building on evidence.

The four stages:

- Collect feedback from every available channel: on-page surveys, email responses, social comments, support tickets, review platforms, and behavioral data like scroll depth and time on page

- Analyze what you collected to find patterns, recurring complaints, content gaps, and high-performing topics worth expanding

- Implement changes based on your analysis, whether that means rewriting an underperforming article, creating a new piece that answers a common question, or restructuring a landing page

- Measure the results of your changes, comparing pre-change and post-change metrics to confirm whether the iteration actually improved performance
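The Measure stage boils down to comparing one metric before and after a change. Here is a minimal Python sketch, assuming you have exported daily values of a single metric for equal-length windows on either side of the change; the function name and the sample conversion rates are hypothetical.

```python
from statistics import mean


def pre_post_change(before: list[float], after: list[float]) -> dict[str, float]:
    """Compare the average of one metric before and after a content change."""
    if not before or not after:
        raise ValueError("both windows need at least one observation")
    pre, post = mean(before), mean(after)
    return {
        "pre": pre,
        "post": post,
        "absolute_change": post - pre,
        # Relative change is undefined when the baseline is zero.
        "relative_change": (post - pre) / pre if pre else float("nan"),
    }


# Hypothetical daily conversion rates for five days before and after a rewrite.
result = pre_post_change(
    [0.020, 0.022, 0.018, 0.021, 0.019],
    [0.024, 0.025, 0.023, 0.026, 0.022],
)
print(f"{result['relative_change']:.1%} relative change")
```

A plain pre/post comparison cannot separate your change from seasonality or a shift in traffic mix, which is why high-stakes changes are worth A/B testing.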

The key word is continuous. Centralize feedback from multiple sources and tie it to business goals. One-off feedback exercises produce one-off insights. What you need is a living system.

Here is a quick comparison of the three most common feedback sources and how they perform across key criteria:

| Feedback source | Speed | Depth | Best for |
|---|---|---|---|
| User surveys | Medium | High | Retention, satisfaction, intent |
| Engagement analytics | Fast | Low to medium | Performance signals, UX issues |
| Social listening | Medium | Medium | Brand sentiment, trending topics |

Pro Tip: Do not store feedback in separate spreadsheets or tools that do not talk to each other. Centralize everything in one platform or dashboard so your whole team sees the same picture. Disconnected data leads to disconnected content decisions.
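Centralizing mostly means agreeing on one record shape that every source is converted into. A minimal sketch, assuming two hypothetical exports (survey rows and support tickets) with made-up field names; your tools' actual export formats will differ.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class FeedbackItem:
    """One piece of feedback in a shared shape, whatever channel it came from."""
    source: str            # e.g. "survey", "support", "social"
    received: date
    page: Optional[str]    # URL or content ID the feedback refers to, if known
    text: str


def from_survey(row: dict) -> FeedbackItem:
    # Hypothetical survey export with "submitted", "url" and "answer" columns.
    return FeedbackItem("survey", date.fromisoformat(row["submitted"]),
                        row.get("url"), row["answer"])


def from_ticket(ticket: dict) -> FeedbackItem:
    # Hypothetical helpdesk export; tickets carry no page reference.
    return FeedbackItem("support", date.fromisoformat(ticket["created"]), None,
                        f"{ticket['subject']}: {ticket['body']}")


def centralize(surveys: list[dict], tickets: list[dict]) -> list[FeedbackItem]:
    """Convert every source to FeedbackItem and merge them in date order."""
    items = [from_survey(r) for r in surveys] + [from_ticket(t) for t in tickets]
    return sorted(items, key=lambda item: item.received)


feed = centralize(
    [{"submitted": "2026-01-05", "url": "/blog/pricing", "answer": "Too long"}],
    [{"created": "2026-01-03", "subject": "Login", "body": "Can't find the reset link"}],
)
print([item.source for item in feed])  # ['support', 'survey']
```

Once every channel lands in the same shape, one dashboard or one analysis script can read all of it.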

Connecting this process to your strategic marketing planning is what turns a feedback loop into a growth engine. Without that connection, feedback becomes an audit exercise instead of a direction-setter. Once you have the loop structure clear, the next question is which types of feedback signals are actually worth trusting.

Types of feedback: Engagement signals vs. direct surveys

Not all feedback is equal. Some signals are fast but shallow. Others are slow but deeply predictive of long-term results. Knowing when to use each type is a competitive advantage.

Quantitative feedback (engagement signals):

- Page views, session duration, scroll depth

- Click-through rates on CTAs and internal links

- Bounce rates and exit pages

- Conversion rates by content type

These numbers are easy to collect and fast to act on. If a blog post has a 90% bounce rate, that is a strong signal worth investigating, whether the cause is the headline, content that does not match what visitors expected, or slow page loading. Engagement analytics work best for catching obvious performance failures and validating quick iterations.
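To spot pages like that, you can compute bounce rate per landing page from raw session data. This sketch assumes each session is exported as the ordered list of page paths it viewed and uses the classic definition (a session that viewed only one page). GA4 defines bounce rate differently, as the share of sessions that were not engaged, so treat this as an illustration rather than a match for any analytics tool. The 0.9 threshold mirrors the 90% example above.

```python
from collections import defaultdict


def bounce_rates(sessions: list[list[str]]) -> dict[str, float]:
    """Share of sessions per landing page that viewed only that one page.

    Each session is the ordered list of page paths viewed; the first is the landing page.
    """
    entries: dict[str, int] = defaultdict(int)
    bounces: dict[str, int] = defaultdict(int)
    for pages in sessions:
        if not pages:
            continue  # skip empty sessions
        landing = pages[0]
        entries[landing] += 1
        if len(pages) == 1:
            bounces[landing] += 1
    return {page: bounces[page] / entries[page] for page in entries}


def flag_high_bounce(rates: dict[str, float], threshold: float = 0.9) -> list[str]:
    """Landing pages at or above the threshold, sorted alphabetically."""
    return sorted(page for page, rate in rates.items() if rate >= threshold)


# Hypothetical sessions: /blog/a bounces 9 times out of 10, /blog/b once out of 2.
sessions = [["/blog/a"]] * 9 + [["/blog/a", "/pricing"]] + [["/blog/b", "/signup"], ["/blog/b"]]
rates = bounce_rates(sessions)
print(rates)                    # {'/blog/a': 0.9, '/blog/b': 0.5}
print(flag_high_bounce(rates))  # ['/blog/a']
```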

Qualitative feedback (direct surveys and open responses):

- Net Promoter Score (NPS) surveys asking, on a 0 to 10 scale, how likely someone is to recommend your content

- Exit intent surveys asking why a visitor is leaving

- Post-purchase or post-download feedback forms

- Open comment boxes at the bottom of articles
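NPS itself is simple arithmetic: respondents who answer 9 or 10 are promoters, those who answer 0 to 6 are detractors, and the score is the percentage of promoters minus the percentage of detractors, giving a range of -100 to +100. A minimal sketch with hypothetical responses:

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS from 0-10 answers: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("NPS answers must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7-8) count toward the total but not toward either group.
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical answers from an end-of-article survey: 5 promoters, 2 passives, 3 detractors.
print(net_promoter_score([10, 9, 9, 8, 7, 6, 3, 10, 9, 5]))  # 20.0
```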

Here is the critical difference: engagement signals are noisy, while direct surveys are stronger predictors of long-term retention and can outperform behavioral signals, especially for low-signal users who quietly churn without triggering any alert in your analytics.

A user who spends 30 seconds on a page and leaves might be someone who found exactly what they needed instantly, or someone who gave up because the content was confusing. Engagement data alone cannot tell you which. A short, well-placed survey can.

For content impact that compounds over time, you need both. Use analytics to catch red flags fast, and use surveys to understand why those red flags exist. The combination is far more powerful than either method alone.
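One way to combine the two is to attach a one-question survey (for example, a hypothetical "Did you find what you needed?" yes/no prompt) to the page and read each short visit alongside its answer. A minimal sketch, where the 30-second cutoff mirrors the example above and the labels are illustrative:

```python
from typing import Optional


def classify_short_visit(seconds_on_page: float, found_answer: Optional[bool],
                         short_threshold: float = 30.0) -> str:
    """Read one visit's time on page together with its (optional) survey answer."""
    if seconds_on_page > short_threshold:
        return "long visit"
    if found_answer is None:
        return "ambiguous"  # no survey answer: analytics alone cannot tell
    return "quick win" if found_answer else "gave up"


# Hypothetical (seconds on page, answer to "Did you find what you needed?") pairs.
visits = [(25, True), (20, False), (15, None), (240, None)]
print([classify_short_visit(s, a) for s, a in visits])
# ['quick win', 'gave up', 'ambiguous', 'long visit']
```

The share of visits that stay "ambiguous" is itself useful: it tells you how much of your short-visit traffic you are still guessing about.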

Practical scenarios for small and medium businesses:

- Launching a new content series: Use a survey after the first three pieces to gauge whether the direction matches audience expectations

- Diagnosing a traffic drop: Start with analytics to isolate which pages are losing ground, then add a short survey to those pages to collect qualitative context

- Improving email open rates: Survey your list directly, asking which topics they want to see more of, rather than guessing from click data
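For the traffic-drop scenario, the analytics step amounts to comparing sessions per page across two equal-length periods and ranking the largest relative losses. A minimal sketch, assuming you have exported sessions per page for both periods as dictionaries; the `min_sessions` and `top_n` defaults are arbitrary choices:

```python
def biggest_drops(previous: dict[str, int], current: dict[str, int],
                  min_sessions: int = 100, top_n: int = 5) -> list[tuple[str, float]]:
    """Rank pages by relative session loss between two equal-length periods.

    Pages with fewer than `min_sessions` in the previous period are skipped,
    because small baselines produce noisy percentages.
    """
    drops = []
    for page, before in previous.items():
        if before < min_sessions:
            continue
        after = current.get(page, 0)  # a page missing from `current` lost all its traffic
        change = (after - before) / before
        if change < 0:
            drops.append((page, change))
    drops.sort(key=lambda item: item[1])  # most negative change first
    return drops[:top_n]


# Hypothetical sessions per page for the previous and current 30 days.
prev = {"/blog/a": 1000, "/blog/b": 400, "/blog/c": 50, "/blog/d": 800}
curr = {"/blog/a": 600, "/blog/b": 420, "/blog/c": 5, "/blog/d": 700}
print(biggest_drops(prev, curr))  # [('/blog/a', -0.4), ('/blog/d', -0.125)]
```

The pages at the top of this list are the ones to give a short survey, so you get the qualitative "why" behind the numbers.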

How AI and automation shape feedback and content improvement

Once you understand what types of feedback to collect, the challenge becomes scale. A team of two or three marketers cannot manually read 500 survey responses every week and also produce content. This is where AI tools stop being a nice-to-have and become essential.

Modern AI text analytics tools can process hundreds of feedback responses in minutes, automatically grouping them into themes like "navigation confusion," "topic requests," or "format complaints." Instead of spending hours reading and categorizing, your team sees a ranked list of the themes users raise most often.
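AI tools typically group responses with language models or text embeddings. To keep this self-contained, the sketch below swaps in simple keyword matching, which is far cruder but shows the shape of the output: each response is tagged with themes, and themes are ranked by how many responses mention them. The theme names come from the paragraph above; the keyword lists are hypothetical.

```python
from collections import Counter

# Hypothetical keyword map; real AI tools infer themes instead of using fixed lists.
THEMES = {
    "navigation confusion": ("can't find", "menu", "lost", "where is"),
    "topic requests": ("please cover", "write about", "would love", "more on"),
    "format complaints": ("too long", "wall of text", "video", "hard to read"),
}


def tag_themes(response: str) -> list[str]:
    """Return every theme whose keywords appear (as substrings) in the response."""
    text = response.lower()
    return [theme for theme, keywords in THEMES.items()
            if any(k in text for k in keywords)]


def rank_themes(responses: list[str]) -> list[tuple[str, int]]:
    """Count how many responses mention each theme, most common first."""
    counts = Counter(theme for r in responses for theme in tag_themes(r))
    return counts.most_common()


responses = [
    "Too long, I gave up halfway",
    "Can't find the pricing page from the menu",
    "Would love more on email automation",
    "Hard to read on mobile",
]
print(rank_themes(responses))
# [('format complaints', 2), ('navigation confusion', 1), ('topic requests', 1)]
```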

AI also helps on the production side. One analysis of 1.2 million posts found that AI-assisted posts had a higher median engagement rate (5.87%) than non-AI posts (4.82%), and that teams using AI sustained much higher content volumes without sacrificing consistency.
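It helps to be precise about what those two medians mean. The gap is about one percentage point in absolute terms, or roughly a 22% relative lift. The arithmetic:

```python
def relative_lift(treated: float, baseline: float) -> float:
    """Relative difference between two rates; 0.218 means 21.8% higher."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (treated - baseline) / baseline


ai_median, non_ai_median = 5.87, 4.82  # median engagement rates quoted above, in %
print(f"{ai_median - non_ai_median:.2f} percentage points")            # 1.05 percentage points
print(f"{relative_lift(ai_median, non_ai_median):.1%} relative lift")  # 21.8% relative lift
```

Both numbers describe the same gap; which one you report changes how large it sounds, and neither explains on its own why the two groups differ.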

Key benefits of AI in the feedback and content improvement cycle:

- Sentiment analysis that automatically flags negative feedback spikes before they become larger problems

- Topic clustering that identifies which audience questions are most common and underserved

- Competitor content gap analysis that combines external data with your own feedback signals

- Automated reporting that keeps the whole team aligned without manual data pulls
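Flagging a negative-feedback spike can be as simple as comparing today's share of negative responses with a recent baseline. This sketch assumes a sentiment model has already labeled each response and that you have aggregated the daily share labeled negative; it then applies a rolling z-score rule, a plain statistical stand-in rather than any specific vendor's method. The 7-day window, the 3-standard-deviation cutoff, and the 0.01 floor are hypothetical defaults to tune.

```python
from statistics import mean, pstdev


def negative_spikes(daily_negative_share: list[float], window: int = 7,
                    k: float = 3.0, min_spread: float = 0.01) -> list[int]:
    """Return indexes of days whose negative share jumps well above the recent baseline.

    A day is flagged when it exceeds the mean of the previous `window` days by
    more than `k` standard deviations. `min_spread` floors the deviation so a
    very flat history does not turn tiny wiggles into alerts.
    """
    flagged = []
    for day in range(window, len(daily_negative_share)):
        history = daily_negative_share[day - window:day]
        baseline, spread = mean(history), pstdev(history)
        if daily_negative_share[day] > baseline + k * max(spread, min_spread):
            flagged.append(day)
    return flagged


# Hypothetical daily share of responses labeled negative; day 8 spikes.
shares = [0.10, 0.12, 0.11, 0.09, 0.10, 0.11, 0.10, 0.12, 0.35, 0.11]
print(negative_spikes(shares))  # [8]
```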

Pro Tip: Use AI to centralize and surface signals, but always bring a human strategist to interpret the why behind the data. AI can tell you that 40% of survey respondents want shorter articles, but a human needs to decide whether that reflects genuine preference or a landing page formatting problem.

Building this into an AI-powered content workflow means your team spends more time on strategy and less on data wrangling. The brands that scale feedback effectively are not the ones with the biggest teams; they are the ones with the best systems. AI also supports brand loyalty by keeping content consistently relevant rather than generic.

Turning feedback insights into real growth opportunities

Collecting and analyzing feedback is only valuable if you turn insights into action. This is where many teams stall. They gather the data, nod at the themes, and then go back to their existing content calendar. That is not a feedback loop. That is feedback theater.

Here is a step-by-step approach for operationalizing feedback:

- Run a content audit using your feedback data to identify which existing pieces are underperforming and why

- Map each feedback theme to a specific action, whether that is a rewrite, a new article, a format change, or a topic addition

- Rank actions by business impact, focusing first on changes that affect high-traffic pages or content tied to conversion goals

- Run A/B tests on your highest-leverage changes to confirm they actually improve performance before scaling

- Track results over 30, 60, and 90 days, comparing engagement, retention, and conversion rates before and after
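For the A/B testing step, a common way to check whether a conversion-rate difference is more than noise is a two-proportion z-test. A minimal sketch using only the standard library, assuming you fixed the sample size in advance (repeatedly peeking at results and stopping early inflates false positives); the visitor and conversion counts are hypothetical:

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in conversion rate between variants A and B.

    Returns (z, p_value), using the pooled-proportion standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both variants converted all or none: no difference to test
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function, then a two-sided p-value.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical test: variant A converts 200 of 10,000 visitors, variant B 260 of 10,000.
z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Here variant B's 2.6% against A's 2.0% gives a p-value well below 0.05, so the difference is unlikely to be noise; with a few hundred visitors per variant, the same gap usually would not be.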

Campaign improvements that consistently come from well-run feedback loops include:

- Repurposing high-engagement blog posts into video scripts or email sequences based on user demand

- Launching new topic clusters directly from the most common questions in your survey responses

- Fixing misleading titles that attract traffic but disappoint readers (a major bounce rate driver)

- Adding FAQ sections based on support ticket themes, which also benefits SEO

Linking all of this back to revenue is critical for justifying the investment in feedback infrastructure. Teams that connect feedback to specific business outcomes, such as lower churn, higher conversion, or increased organic reach, tend to get more internal buy-in and more resources to keep improving.

Our take: Why great feedback beats more content every time

We have worked with enough content teams to say this plainly: the "just publish more" mindset is one of the most expensive habits in modern marketing. It feels productive. It produces output. But output without direction is noise.

The uncomfortable truth is that most small and medium businesses are sitting on a goldmine of feedback they are not using. Support tickets, survey responses, social comments, review platforms. All of it is right there. But because it takes effort to centralize and analyze, it sits ignored while the team brainstorms new blog topics based on gut instinct.

There is also the problem of feedback bias. Teams tend to act on the loudest feedback, not the most representative. One complaint from a vocal user becomes a product pivot while the needs of the silent majority go unaddressed. Tying feedback interpretation to your analytics and ROI tracking protects you from expensive overcorrections.
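A simple guard against loudness bias is to count how many distinct users raised each theme, not just how many messages mention it. A minimal sketch, assuming feedback has already been tagged with a theme and a user identifier (both hypothetical here):

```python
from collections import defaultdict


def theme_reach(feedback: list[tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Per theme: raw message count and number of distinct users who raised it.

    `feedback` is a list of (user_id, theme) pairs from an upstream tagging step.
    """
    messages: dict[str, int] = defaultdict(int)
    users: dict[str, set[str]] = defaultdict(set)
    for user_id, theme in feedback:
        messages[theme] += 1
        users[theme].add(user_id)
    return {theme: {"messages": messages[theme], "users": len(users[theme])}
            for theme in messages}


# Hypothetical data: one vocal user sends six messages; three users ask for examples.
feedback = [("u1", "shorter articles")] * 6 + [
    ("u2", "more examples"), ("u3", "more examples"), ("u4", "more examples"),
]
reach = theme_reach(feedback)
by_users = sorted(reach, key=lambda theme: reach[theme]["users"], reverse=True)
print(by_users)  # ['more examples', 'shorter articles']
```

Ranked by message volume, "shorter articles" would come first; ranked by distinct users, it drops to second.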

Our editorial belief: one targeted content iteration, informed by real user signals, outperforms ten new generic articles every single time. The teams that grow fastest are not publishing the most. They are listening the best.

Next steps: Automate feedback-driven growth

Turning feedback into a repeatable, scalable growth system is exactly what the right toolset makes possible. Manual feedback collection and analysis creates a ceiling for small teams. Automation removes it.

Babylovegrowth.ai's SEO automation platform is built to help content teams do exactly this: collect content signals, optimize continuously, and scale output without losing quality or relevance. Paired with our backlink building software and organic traffic tool, you get a full-stack system for translating feedback into search visibility and measurable growth. If your team is ready to move from reactive publishing to feedback-driven momentum, this is the infrastructure that makes it happen.

Frequently asked questions

How do I decide which feedback method is best for my content?

Combine engagement analytics with direct surveys. Use surveys for retention, especially when users are quietly disengaging, and use analytics for quick performance signals and identifying obvious technical or UX problems.

How can small teams act on feedback without more resources?

Centralizing feedback with AI tools and ranking changes by business impact lets lean teams start with high-priority fixes first. Continual small improvements consistently outperform waiting for a full overhaul.

Are engagement signals like time on page enough to measure content quality?

No. Engagement signals are useful but often misleading. Direct surveys outperform signals for predicting satisfaction and long-term retention, especially for users who disengage without leaving any obvious behavioral trace.

What tools help automate feedback collection and analysis?

AI-powered text analytics and centralization platforms quickly surface recurring themes across large volumes of feedback, making it practical for small teams to process feedback at a scale that would otherwise require a full research department.

How do feedback-driven changes affect SEO?

Often significantly. Feedback-driven content audits close keyword and topic gaps, improve on-page engagement, and lead to content iterations that convert better, all of which can compound into stronger organic rankings over time.

Recommended

Smart SEO,

Faster Growth!

Most Read Articles

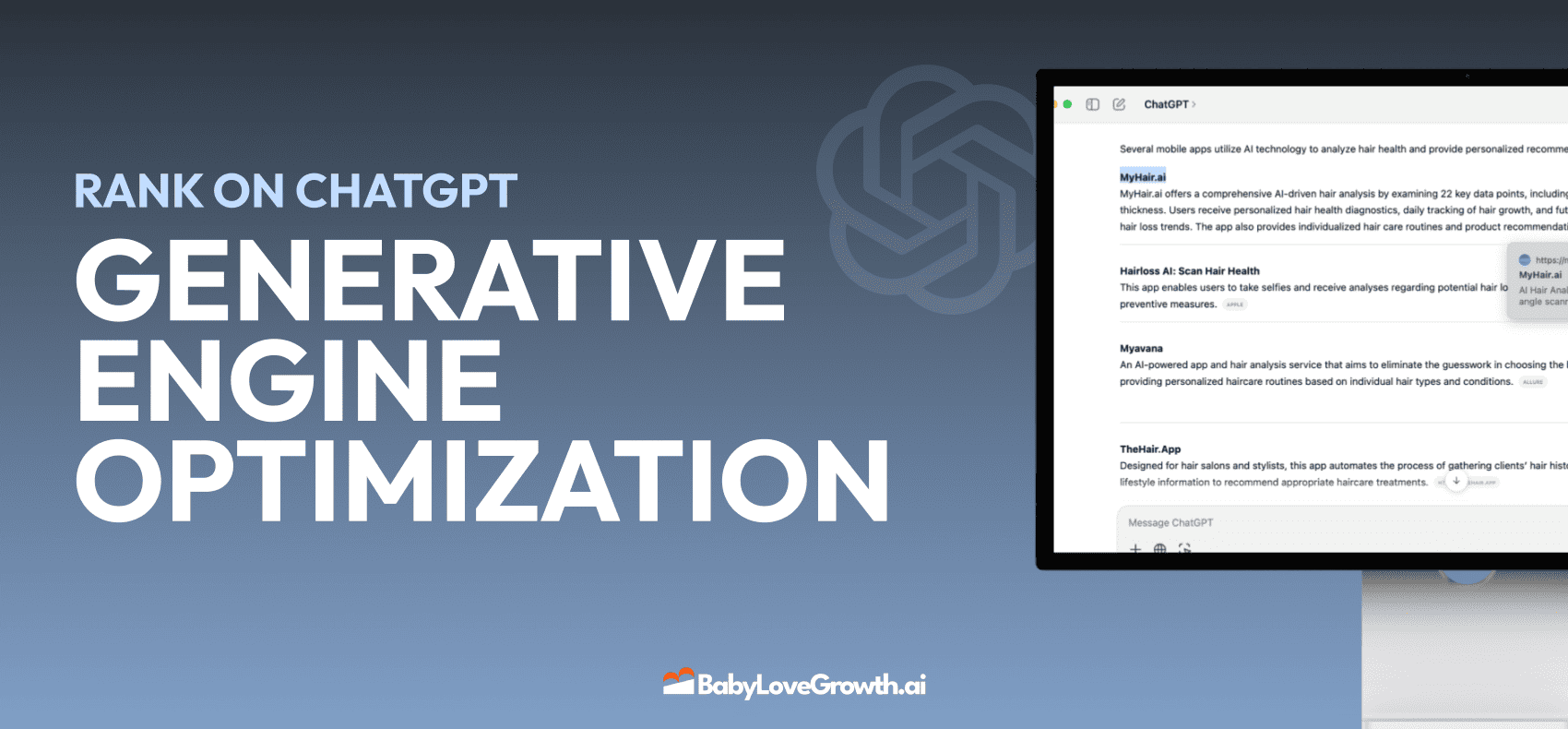

Generative Engine Optimization (GEO)

Learn how Generative Engine Optimization (GEO) helps your content rank in AI search engines like ChatGPT and Google AI. This comprehensive guide explains the differences between SEO and GEO, why it matters for your business, and practical steps to implement GEO strategies for better visibility in AI-generated responses.

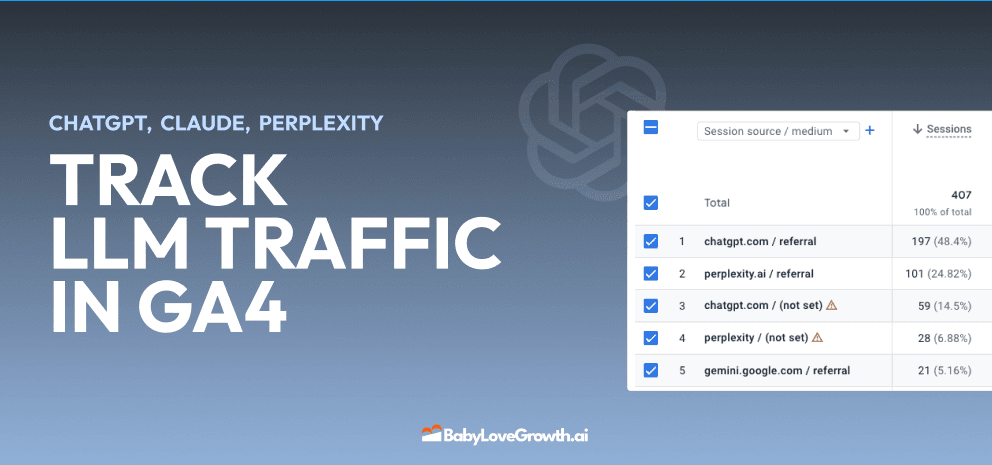

Track LLM Traffic in Google Analytics 4 (GA4)

Learn how to track and analyze traffic from AI sources like ChatGPT, Claude, Perplexity, and Google Gemini in Google Analytics 4. This step-by-step guide shows you how to set up custom filters to monitor AI-driven traffic and make data-driven decisions for your content strategy.

How to Humanize AI Text with Instructions

Learn practical techniques to make AI-generated content sound more natural and human. This guide covers active voice, direct addressing, concise writing, and other proven strategies to transform robotic text into engaging content.

Moz DA vs Ahrefs DR: Which Metric Should You Actually Use?

Moz Domain Authority and Ahrefs Domain Rating look similar but measure very different things. Learn what each score actually tells you, when to use each one, and what to track instead for real SEO results in 2026.

Open AI Revenue and Statistics (2024)

Comprehensive analysis of OpenAI financial performance, user engagement, and market position in 2023. Discover key statistics including $20B valuation, $1B projected revenue, and 100M+ monthly active users.

"Search Google or Type a URL": What It Means & What to Do

Learn what "Search Google or type a URL" actually means, how your browser decides whether to search or navigate, and what to do if your address bar starts behaving unexpectedly. Includes browser settings guides for Chrome, Firefox, Edge, and Safari.